AI Tools: Data Privacy and Misinformation

Especially since the release of OpenAI's ChatGPT, Artificial Intelligence (AI) has been a constant hot topic. Using it in everyday work can optimize many processes and offers great potential, particularly in software development. But this new technology should not be used without caution. In this article, we discuss how AI tools produce incorrect information and why data protection matters when using them.

How AI Works and How Misinformation Is Generated

The best-known flaw of current AI tools is probably that they frequently generate output that is false or confusing. Some of these results are so entertaining that someone has dedicated an entire GitHub repository to them.

At first glance, such incorrect or even funny results often look like citation errors, but in fact they are a fairly common by-product of AI tools. This is because a tool such as ChatGPT is not a search engine like Google. A search engine scours the web using "crawlers" and ranks websites according to certain criteria so that the pages most relevant to the user's query appear as high as possible in the results. For example, links and references from other websites help "confirm" the authority and credibility of a page's information.
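
To make the contrast concrete, the link-based ranking idea can be sketched with the classic PageRank algorithm, in which a page counts as important when other important pages link to it. This is a simplified illustration under our own assumptions: the four-page mini-web and the parameter values (damping factor 0.85, 50 iterations) are hypothetical, and Google's actual ranking combines many more signals than links.

```python
# Simplified PageRank (power iteration): a page is "important" if
# important pages link to it. Illustration only; real search engines
# combine many more ranking signals.


def pagerank(links: dict[str, list[str]], damping: float = 0.85,
             iterations: int = 50) -> dict[str, float]:
    pages = list(links)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}  # start with equal rank everywhere
    for _ in range(iterations):
        # Every page gets a small base share (the "random jump").
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page, outgoing in links.items():
            if outgoing:
                # Split this page's rank evenly across the pages it links to.
                share = damping * rank[page] / len(outgoing)
                for target in outgoing:
                    new_rank[target] += share
            else:
                # A page without outgoing links spreads its rank over all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical mini-web: three sites link to "wiki", which links to "blog".
web = {
    "wiki": ["blog"],
    "blog": ["wiki"],
    "news": ["wiki"],
    "shop": ["wiki"],
}
ranking = sorted(pagerank(web).items(), key=lambda kv: kv[1], reverse=True)
for page, score in ranking:
    print(f"{page}: {score:.3f}")
```

The page with the most (and most important) incoming links ends up on top; an LLM, by contrast, does not rank existing pages at all but generates new text.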

When we send a request to ChatGPT, however, the technical basis is very different. Generative AI tools are based on Large Language Models (LLMs), whose task is to understand human language and predict content as accurately as possible. Much like an athlete preparing for a competition, these models are trained, though not with fitness exercises but by processing mostly public information and through interactions with human trainers. These human trainers are, on the one hand, the developers and testers behind the tools, who give the system feedback on whether an answer was good or bad. But we, the users, also train the AI with every chat and thus influence its future output.

As an example, take the simple sentence starter "The traffic light changes from red to ...". A well-trained AI will most likely suggest something like "yellow", since most texts and most human trainers would consider that the most appropriate answer. Now assume that a large proportion of users give feedback that "yellow" is wrong and that something else, such as "purple", should be the answer. This is of course simply incorrect, but the AI "believes" the users and adjusts its model, making it more likely that the sentence will end with "purple" rather than "yellow".
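
A deliberately simplified sketch of this effect: the hypothetical `ToyNextWordModel` below just counts which continuations it has seen for a prompt and predicts the most frequent one. Real LLMs learn neural-network weights over tokens rather than keeping a lookup table, so this only illustrates the principle that enough (false) feedback shifts the most probable answer.

```python
from collections import Counter
from typing import Optional


class ToyNextWordModel:
    """Hypothetical toy model: predicts the continuation it has seen most often."""

    def __init__(self) -> None:
        # Maps each prompt to a counter of observed continuations.
        self.counts: dict[str, Counter] = {}

    def train(self, prompt: str, continuation: str, weight: int = 1) -> None:
        # Training data and user feedback are treated the same way:
        # both simply increase the count for a continuation.
        self.counts.setdefault(prompt, Counter())[continuation] += weight

    def probabilities(self, prompt: str) -> dict[str, float]:
        # Turn raw counts into relative frequencies (a probability per word).
        counts = self.counts.get(prompt, Counter())
        total = sum(counts.values())
        return {word: n / total for word, n in counts.items()} if total else {}

    def predict(self, prompt: str) -> Optional[str]:
        # Return the most probable continuation, or None for unseen prompts.
        probs = self.probabilities(prompt)
        return max(probs, key=probs.get) if probs else None


prompt = "The traffic light changes from red to"
model = ToyNextWordModel()

# Initial training: texts and trainers overwhelmingly say "yellow".
model.train(prompt, "yellow", weight=100)
print(model.predict(prompt))  # yellow

# Many users now give the (false) feedback that "purple" is correct.
model.train(prompt, "purple", weight=150)
print(model.predict(prompt))  # purple
```

Because the toy model only sees frequencies, it has no way of telling whether the feedback is true; it simply follows the majority.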

Learning from feedback is a very common mechanism in algorithms, but it can have devastating effects on the credibility and truthfulness of an AI. In such cases, one also speaks of "model poisoning".

3 Tips for Responsible Use of AI Chatbots

With this knowledge, you may be a little more forgiving when an AI's answers turn out to be unsatisfactory. To keep enjoying AI tools, here are three tips for using them responsibly:

Fact-Checking

Use AI as an assisting tool, but never as the final authority. Check the results and question the answers.

Provide as Much Detail as Possible

An AI will answer general questions with general answers. If you want a useful answer, describe your problem in as much detail as possible. This way, you will more often get output that makes sense.

Feedback

An AI is worthless without training data, and some of that data comes from you. So tell the AI when it is right and especially when it is wrong. This will benefit future queries.

The Difficulties Between Data Control and Autonomy

When we ask an AI something, we can assume that the content will be cached and processed on the provider's servers. Whether it remains there after the request, e.g. to collect personal data and use it for advertising purposes, depends entirely on the AI developers and their privacy policy. The global pioneer OpenAI specifically states that it does not use this data for commercial purposes and, above all, that it handles it in a GDPR-compliant way. However, this is not necessarily true of every provider, since the data could also be used to create user profiles. Google, for example, words the privacy statement for its in-house chatbot Bard very vaguely:

Google collects your Bard conversations, related product usage information, info about your location, and your feedback. Google uses this data, consistent with our Privacy Policy, to provide, improve, and develop Google products and services and machine learning technologies, including Google’s enterprise products such as Google Cloud. (As of September 19, 2023)

Since Google's product range is very diverse, we as end users do not know exactly whether the data is used only to optimize the AI systems or also for Google's personalized advertising services.

What remains unclear for almost all AI chatbots, however, is whether and how previously entered data can reappear as output. Despite human oversight, an AI is largely an autonomous system that calculates its responses from probabilities and previous interactions, so it is quite possible that information entered earlier will resurface in an output. Even AI developers have little control over this behavior. Therefore, never disclose personal or sensitive information to an AI. If this happens accidentally, immediately visit the AI operator's privacy or contact page and request that the data be deleted. OpenAI provides a dedicated contact form for this purpose.
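
One practical safeguard is to mask obvious identifiers before pasting text into a chatbot. The following is a minimal sketch under our own assumptions: the `redact` function and its two regular expressions (for email addresses and for phone numbers starting with "+" or "0") are hypothetical and will miss many kinds of personal data, such as names or postal addresses, so they complement rather than replace careful judgment.

```python
import re

# Hypothetical, deliberately simple patterns. They catch common email
# addresses and phone numbers starting with "+" or "0", nothing more.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"(?:\+|\b0)\d[\d /-]{6,}\d"),
}


def redact(text: str) -> str:
    """Replace every match of each pattern with a placeholder like [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


prompt = "Please write to max.mustermann@example.com or call +43 660 1234567."
print(redact(prompt))
# Please write to [EMAIL] or call [PHONE].
```

Running such a filter locally means the identifiers never reach the provider's servers in the first place, so there is nothing to request deletion for later.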

Our Conclusion

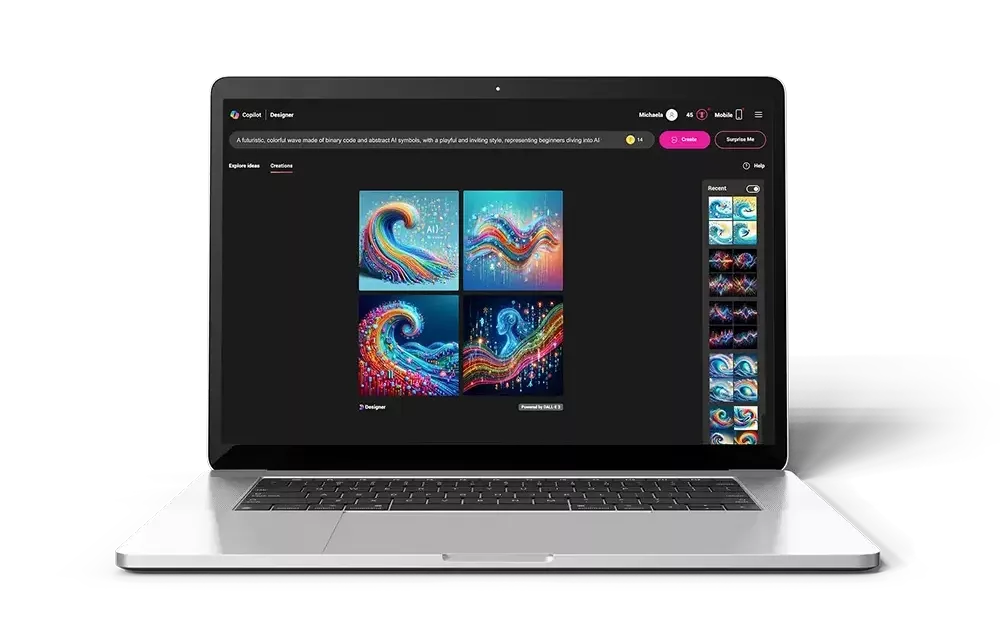

The use of AI tools, especially Large Language Models such as ChatGPT, undoubtedly offers many advantages and the potential to optimize processes and open up new opportunities (here we show an overview of currently popular AI tools in various areas). However, we should be aware of the challenges and risks. Misinformation can arise because AI models are trained on the data provided to them and are influenced by user feedback. As responsible users, we should therefore critically question AI-generated content and check the results. Providing detailed information helps make AI responses more accurate and relevant, and fact-checking catches the errors that remain.

In addition, we should be aware of data protection. AI tools store and process our queries, and it is important to know how that data is used. Companies like OpenAI make commitments about data use in their privacy policies, but not every provider does so. Therefore, we should not carelessly disclose personal and sensitive information to AIs, and if we have concerns, we should immediately visit the privacy or contact page of the provider or operator.

Overall, AI tools can be a valuable addition to everyday work, but we should use them carefully and responsibly to unlock their potential while minimizing the risks. By staying alert and providing feedback as users, we can help AI systems evolve and maximize their benefits without losing control over our data or the credibility of the information.

Jürgen Fitzinger

Michaela Mathis